The worst kind of Internet herpaderp.

I only did so because I had installed proton-ge. You know, “when all you have is a hammer, every problem looks like a nail” -type of thing. :D

Running Galaxy with proton-ge. Sure, it doesn’t install linux versions of games or anything, but it works.

Basically what I did was:

`proton gog-galaxy-installer.exe` to install. It installs to `~/.local/share/proton-pfx/0/pfx/drive_c/Program Files/GOG Galaxy` (or somesuch).

Seems to work fine. Some older version of proton-ge and/or the nvidia driver under wayland made the client a bit sluggish, but that has fixed itself. Games like Cyberpunk work fine. The Galaxy overlay doesn’t, though.

I don’t play TF2, but I thought basically all hl2 -family games were updated to 64bit ages ago… apparently this wasn’t the case :o

Any of the other games running the same engine still in 32bit land?

amd cpu, but nvidia gpu, so as far as I’d understand, not using ACO then?

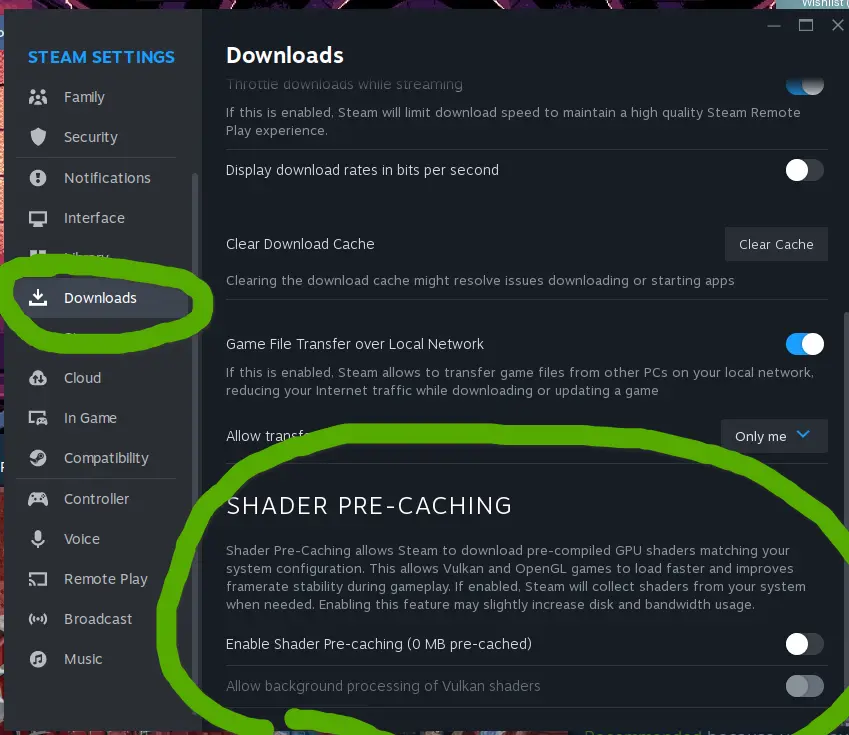

yep, I’m aware. I just haven’t observed* any compilation stutters - so in that sense I’d rather keep it off and save the few minutes (give or take) on launch

*Now, I’m sure the stutters are there and/or the games I’ve recently played on linux haven’t been susceptible to them, but the tradeoff is worth it for me either way.

well, I do have this one game I’ve tried to play, Enshrouded, and it does the shader compilation on its own, in-game. The compiled shaders seem to persist between launches, reboots, etc., but not across driver/game updates. So it stands to reason they are cached somewhere. As for where, not a clue.

And if it’s the game doing the compilation, I would assume non-steam games can do it too. Why wouldn’t they?

But, ultimately, I don’t know - just saying these are my observations and assumptions based on those. :P

turning it off will wipe the cached shaders. That cleaned up like ~40 GB (IIRC) for me, without any noticeable difference in performance, stability or smoothness. Though my set of games at the time wasn’t all that big: path of exile, subnautica: below zero, portal 2 and some random smaller games.
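If you want to see how much space the cache takes before turning it off, something like this should do. Rough sketch only: the path is my assumption of the default native Steam install location (Flatpak installs and extra library folders will differ), and `STEAM_SHADER_CACHE` is just a made-up override variable for this snippet, not something Steam reads.

```shell
#!/bin/sh
# Assumed default location of Steam's shader pre-cache; adjust for
# Flatpak or custom library folders. STEAM_SHADER_CACHE is a
# hypothetical override used only by this script.
cache_dir="${STEAM_SHADER_CACHE:-$HOME/.local/share/Steam/steamapps/shadercache}"

if [ -d "$cache_dir" ]; then
    # Total size of the cache directory, human-readable.
    du -sh "$cache_dir"
    status="found"
else
    echo "no shader cache at $cache_dir"
    status="missing"
fi
```

Run it before and after toggling the setting to see what actually got freed.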

Overall I’m still getting used to the Steam “processing vulkan shaders” pretty much every time a game updates, but it’s worth it for the extra performance.

That can be turned off, though. Haven’t noticed much of a difference after doing so (though, I am a filthy nvidia-user). It also saves quite a bit of disk space.

doesn’t seem like there are any assets in there (textures, music, sounds, videos…), just the code, so its footprint is fairly small just because of this.

Exactly that. Awesome and thanks.

Now, aggressive waiting for the updates begins

am I correct to assume this is the thing that’ll fix eg. Steam going full seizure mode under wayland + nvidia?

It’s not even limited to nvidia, I also get it on intel igpu (i5-2320, ancient, I know).

On one hand, I’d assume Valve knows what they’re doing, but setting the value that high seems like it’s effectively removing the guardrail altogether. Is that safe? And what is the worst that can happen if an app starts using maps in the billions?

Hastily read around in the related issue threads, and it seems like on its own vm.max_map_count doesn’t do much… as long as apps behave. It’s some sort of “guard rail” which prevents processes from getting too many “maps”. Still kinda unclear what these maps are and what happens if a process gets to have excessive amounts.

That said: https://access.redhat.com/solutions/99913

According to kernel-doc/Documentation/sysctl/vm.txt:

- This file contains the maximum number of memory map areas a process may have. Memory map areas are used as a side-effect of calling malloc, directly by mmap and mprotect, and also when loading shared libraries.

- While most applications need less than a thousand maps, certain programs, particularly malloc debuggers, may consume lots of them, e.g., up to one or two maps per allocation.

- The default value is 65530.

- Lowering the value can lead to problematic application behavior because the system will return out of memory errors when a process reaches the limit. The upside of lowering this limit is that it can free up lowmem for other kernel uses.

- Raising the limit may increase the memory consumption on the server. There is no immediate consumption of the memory, as this will be used only when the software requests, but it can allow a larger application footprint on the server.

So, at the risk of higher memory usage, applications can go wroom-wroom? That’s my takeaway from this.
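For anyone wanting to poke at this themselves: a small sketch that reads the current limit and counts how many map areas a process has right now. It relies on each line in `/proc/<pid>/maps` being one mapped area, and uses the shell’s own PID as a stand-in; swap in the PID of a game to see how close it gets.

```shell
#!/bin/sh
# Current per-process limit on memory map areas.
limit=$(cat /proc/sys/vm/max_map_count)

# Map areas in use by a process: one line per area in /proc/<pid>/maps.
# $$ is this shell's own PID, used here only as an example target.
pid=$$
used=$(wc -l < "/proc/$pid/maps")

echo "limit: $limit, used by PID $pid: $used"
```

A plain shell sits far below the limit, which fits the “most applications need less than a thousand maps” bit above.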

edit: ofc. I pasted the wrong link first. derrr.

edit: Suse’s documentation has some info about the effects of this setting: https://www.suse.com/support/kb/doc/?id=000016692

I’m kinda amazed it wasn’t DOOM again. Damn cool project!

the bin and cue files are a CD/DVD image. IIRC you can’t mount those directly, but you can convert them to ISO with `bin2iso` (there are probably other tools too).

The ISO file you can then mount with something like

`mount -o loop /path/to/my-iso-image.iso /mnt/iso`

and then pull the files out from there.

As for directly pulling files out from bin/cue… dunno.